Why \(1^{\infty} \neq 1\)

A debate that came up recently in some of my work was over the right way to define \(1^\infty\). Many people say that since \(1^n = 1\) for every integer \(n\), \(1^\infty = 1\) as well. That's a very reasonable position, but when we start using more powerful mathematics, it's not good enough anymore.

One very good way to approach this is to define a power function \[ f(x,y) = x^{1/y} \] for \(x > 0\) and \(y > 0\). We are interested in the value of \[ f(1,0) = 1^{1/0} = 1^{\infty}. \] However, we need a formal framework, as \(\infty\) is not formally a number. Acknowledging Berkeley's criticism of the ambiguity of infinitesimals, we employ Weierstrass's idea of limits and define \[ f(1,0) = \lim_{x\rightarrow 1,\, y\rightarrow 0^+} x^{1/y}. \] We cannot easily calculate a two-dimensional limit directly, but if a unique limit does exist, then for any parameterization \(x(t), y(t)\) with \[ \lim_{t\rightarrow 0} x(t) = 1 \] and \[ \lim_{t\rightarrow 0} y(t) = 0^+ \] we must have \[ f(1,0) = \lim_{t\rightarrow 0} x(t)^{1/y(t)}. \]

Now take \(x(t) = \exp(t)\) and \(y(t) = t/\log(k)\) for some constant \(k > 1\). These satisfy our requirements \(x(t) \rightarrow 1\) and \(y(t) \rightarrow 0^+\) as long as we restrict \(t\) to its positive values. Then \begin{align*} f(1,0) &= \lim_{t\rightarrow 0^+} x(t)^{1/y(t)} \\ &= \lim_{t\rightarrow 0^+} ( \exp(t) )^{\log(k)/t} \\ &= \lim_{t\rightarrow 0^+} \exp\left(t \cdot \frac{\log(k)}{t}\right) \\ &= \lim_{t\rightarrow 0^+} \exp(\log(k)) \\ &= k. \end{align*} For \(0 < k < 1\), the same choice works if we instead let \(t \rightarrow 0^-\), since then \(t\) and \(\log(k)\) are both negative and \(y(t)\) stays positive; \(k = 1\) comes from the constant path \(x(t) = 1\). So \(k\) can be any positive constant, and the limit IS NOT uniquely defined. A reasonable person can therefore make a sound argument that \(1^\infty = 2\), or any other positive number, even though at first pass this seems ridiculous. This is one of the weird, counter-intuitive problems with \(\infty\) that pops up when you haven't carefully defined what you mean and somebody else is working with a slightly different definition.
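As a quick numerical sanity check of the argument above, here is a minimal Python sketch, assuming ordinary double-precision floats. The helper names `power`, `along_k_path`, and `along_constant_path` are hypothetical, introduced only for illustration. Along the curve \(x = e^t\), \(y = t/\log k\) the expression collapses to \(e^{\log k} = k\) for every admissible \(t\), so the values should stay near \(k\) however small \(t\) gets, while the straight path \(x = 1\) gives exactly 1.

```python
import math

def power(x: float, y: float) -> float:
    """The post's f(x, y) = x**(1/y), defined only for x > 0 and y > 0."""
    if x <= 0 or y <= 0:
        raise ValueError("f(x, y) is only defined for x > 0 and y > 0")
    return x ** (1.0 / y)

def along_k_path(k: float, t: float) -> float:
    """Hypothetical helper: evaluate f on x(t) = exp(t), y(t) = t / log(k).

    k must be positive and not equal to 1 (log(1) = 0).
    y(t) > 0 requires t and log(k) to share a sign:
    t > 0 when k > 1, and t < 0 when 0 < k < 1.
    """
    return power(math.exp(t), t / math.log(k))

def along_constant_path(t: float) -> float:
    """Hypothetical helper: evaluate f on x(t) = 1, y(t) = t, for t > 0."""
    return power(1.0, t)

ts = (1e-1, 1e-3, 1e-6)
for k in (2.0, 10.0, 0.5):
    # Approach t = 0 from the side that keeps y(t) positive.
    sign = 1.0 if k > 1 else -1.0
    print(f"k = {k}:", [along_k_path(k, sign * t) for t in ts])
print("x = 1 path:", [along_constant_path(t) for t in ts])
```

Each `k` row should hover around its own `k` (rounding error grows slightly as \(t\) shrinks, because the exponent \(1/y\) becomes large), and the \(x = 1\) row is exactly 1.0: two paths into the same point \((1, 0)\), two different answers.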

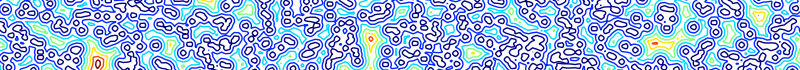

This also provides an example of the Stokes phenomenon outside of complex analysis. The Stokes phenomenon is a classical applied-math result: the asymptotic expansion of an analytic function can change form discontinuously as you cross certain lines in the complex plane (Stokes lines), even though the function itself varies smoothly. However, I feel that it's a more general phenomenon, one that's easily overlooked by folks without specific training in asymptotic analysis. In the current example, the limit of \(x^{1/y}\) as \(y\rightarrow 0^+\) is a discontinuous function of \(x\): \begin{align*} \lim_{y\rightarrow 0^+} x^{1/y} = \begin{cases} 0 & \text{if } 0 < x < 1, \\ 1 & \text{if } x = 1, \\ \infty & \text{if } x > 1. \end{cases} \end{align*}
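The case split can also be seen numerically. Below is a minimal Python sketch (the helper `limit_table` is a hypothetical name) that tabulates \(x^{1/y}\) for one base below, at, and above 1 as \(y\) shrinks. Writing \(x^{1/y} = e^{\log(x)/y}\) shows why the cases separate: the exponent runs to \(-\infty\), stays at 0, or runs to \(+\infty\) according to the sign of \(\log x\).

```python
def limit_table(xs, ys):
    """Hypothetical helper: tabulate x**(1/y) for each base x as y shrinks toward 0+."""
    return {x: [x ** (1.0 / y) for y in ys] for x in xs}

# Three bases straddling x = 1, and y values shrinking toward 0 from above.
# y stops at 1e-3 because 1.5 ** (1 / 1e-4) would overflow a double
# (Python raises OverflowError here rather than returning inf).
bases = (0.5, 1.0, 1.5)
ys = (1e-1, 1e-2, 1e-3)

for x, row in limit_table(bases, ys).items():
    print(f"x = {x}: " + ", ".join(f"{v:.3e}" for v in row))
```

This is also where the arbitrary limits come from: letting \(x\) approach 1 at the same time as \(y \rightarrow 0^+\) lets the exponent \(\log(x)/y\) settle on any value, such as \(\log k\).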

Formalism can be very hand-tying, but sometimes it's the only way to get out of some tight squeezes.