More stochastic than?

In some current research, I need a way to compare distributions and say that one probability measure is more stochastic than another. After a little effort (wheel re-invention and a directed literature search), I happened on the very cool idea of second-order stochastic dominance. But here is a different approach, just for kicks.

The basic idea is that, given two measures with the same expected value, measures that exhibit more variation around the center are dominated by measures that exhibit less. So singleton \(\delta\)-functions dominate all other measures.

Let's say that for two measures \(f\) and \(g\) centered at \(0\), measure \(f\) is dominated by measure \(g\) if and only if

\[ \forall x, \quad \int_{0}^{x} \int_{0}^{v} f(u) \, du \, dv \leq \int_{0}^{x} \int_{0}^{v} g(u) \, du \, dv \]
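
To get a feel for the double integral in this definition, here is a minimal numerical sketch. It assumes the measures have densities, and it uses two zero-mean Gaussians purely as an illustrative pair (they are not part of the argument above); the helper names `double_integral_from_center` and `gaussian` are made up for this example. It simply prints the double integral for a narrow and a wide density at a few points, so the two sides of the comparison can be inspected directly.

```python
import math

def double_integral_from_center(density, x, steps=2000):
    """Approximate int_0^x int_0^v density(u) du dv with the trapezoid rule."""
    h = x / steps              # signed step, so negative x works as well
    inner = 0.0                # running value of int_0^v density(u) du
    outer = 0.0                # running value of the double integral
    prev_density = density(0.0)
    for k in range(1, steps + 1):
        cur_density = density(k * h)
        new_inner = inner + 0.5 * h * (prev_density + cur_density)
        outer += 0.5 * h * (inner + new_inner)
        inner, prev_density = new_inner, cur_density
    return outer

def gaussian(sigma):
    """Zero-mean normal density with standard deviation sigma (illustrative choice)."""
    norm = 1.0 / (sigma * math.sqrt(2.0 * math.pi))
    return lambda u: norm * math.exp(-0.5 * (u / sigma) ** 2)

narrow, wide = gaussian(1.0), gaussian(2.0)
grid = [-4.0, -2.0, -0.5, 0.5, 2.0, 4.0]
for x in grid:
    print(x,
          double_integral_from_center(narrow, x),
          double_integral_from_center(wide, x))
```

For this pair, the narrower density gives the larger double integral at every grid point, and the values are symmetric in \(x\) because both densities are symmetric about \(0\).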

Now, just looking at this doesn't give me much intuition. To get a better handle, it's useful to have an example we can study. One of the easiest measures to apply this to is the logistic distribution. If the probability density is

\[p(x;a)=\frac{1}{a \left(e^{x/2a} + e^{-x/2a}\right)^2}\]

then the lowered CDF (the usual CDF minus \(1/2\), so that it vanishes at the center) is

\[P(x; a) := \int_0^{x} p(u; a) \, du = \frac{e^{x/2a} - e^{-x/2a}}{2 (e^{x/2a} + e^{-x/2a})}\]

and the integrated CDF, which we need for the comparison, is

\[I(x; a) := \int_{0}^{x} P(v; a) \, dv = a \ln\left(\frac{e^{-x/2a} + e^{x/2a}}{2} \right)\]
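
As a sanity check on these closed forms, the sketch below compares them against plain trapezoid-rule integration. The function names and the sample values `a = 1.5`, `x = 2.0` are arbitrary choices for illustration; the closed forms are rewritten with `tanh` and `cosh`, which are algebraically the same as the exponential expressions above.

```python
import math

def p(x, a):
    """Logistic density with scale a, as written in the text."""
    return 1.0 / (a * (math.exp(x / (2 * a)) + math.exp(-x / (2 * a))) ** 2)

def P(x, a):
    """Closed-form lowered CDF; equals 0.5 * tanh(x / 2a)."""
    return 0.5 * math.tanh(x / (2 * a))

def I(x, a):
    """Closed-form integrated CDF; equals a * ln(cosh(x / 2a))."""
    return a * math.log(math.cosh(x / (2 * a)))

def trapezoid(func, lo, hi, steps=4000):
    """Composite trapezoid rule for the integral of func over [lo, hi]."""
    h = (hi - lo) / steps
    total = 0.5 * (func(lo) + func(hi))
    for k in range(1, steps):
        total += func(lo + k * h)
    return total * h

a, x = 1.5, 2.0
P_numeric = trapezoid(lambda u: p(u, a), 0.0, x)
I_numeric = trapezoid(lambda v: P(v, a), 0.0, x)
print("P:", P(x, a), "numeric:", P_numeric)
print("I:", I(x, a), "numeric:", I_numeric)
```

The closed-form and numeric values should agree to several decimal places; a larger `steps` tightens the agreement.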

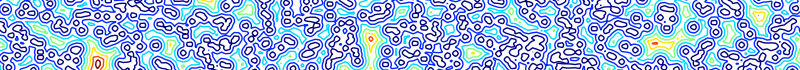

We can plot a few examples (above). What we see is that as \(a\) increases and the measure gains more variation, the CDF integrated from the mean is lower, because there is less probability near the mean. The delta function, whose lowered CDF integrates to \(|x|/2\), dominates everything. This is thought-provoking, in that it relates scalar probability distributions to a subset of convex functions, and convex functions generally have very useful properties when we consider optimization problems.
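
The ordering in \(a\) can also be checked numerically. The sketch below evaluates \(I(x; a)\) at one fixed point for a range of scales, using the identity \(\ln\cosh t = |t| + \ln(1 + e^{-2|t|}) - \ln 2\) so that very small \(a\) does not overflow. The function name, the point `x = 3.0`, and the list of scales are arbitrary choices for illustration.

```python
import math

def integrated_cdf(x, a):
    """I(x; a) = a * ln(cosh(x / 2a)), written in an overflow-safe form."""
    t = abs(x) / (2.0 * a)
    return a * (t + math.log1p(math.exp(-2.0 * t)) - math.log(2.0))

x = 3.0
scales = [0.01, 0.1, 0.5, 1.0, 2.0, 5.0]
values = [integrated_cdf(x, a) for a in scales]
bound = abs(x) / 2.0   # integrated lowered CDF of the delta function at 0

for a, val in zip(scales, values):
    print(f"a={a:<5} I={val:.6f}  |x|/2={bound:.6f}")
```

The printed values fall as \(a\) grows and stay below \(|x|/2\), approaching that bound as \(a \to 0\), which matches the picture of the delta function sitting on top of every logistic curve.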